Yesterday a couple of people on the Google Search Quality Team, Juliane Stiller and Kaspar Szymanski, wrote a blog post titled Dynamic URLs vs. static URLs. In it they address several beliefs people in the webmastering community hold about whether or not Google has trouble with dynamic urls, and whether or not webmasters should rewrite them into what is commonly referred to as “search engine friendly” formats.

Their advice, clearly stated in the post, is that webmasters should not attempt to rewrite these urls, and if they do then they should just replace them with static content:

Does that mean I should avoid rewriting dynamic URLs at all? – That’s our recommendation, unless your rewrites are limited to removing unnecessary parameters, or you are very diligent in removing all parameters that could cause problems. If you transform your dynamic URL to make it look static you should be aware that we might not be able to interpret the information correctly in all cases. If you want to serve a static equivalent of your site, you might want to consider transforming the underlying content by serving a replacement which is truly static. – Official Google Webmaster Central Blog

Their logic behind this is that Google has advanced by leaps and bounds in its ability to determine what parameters should be in the url and what shouldn’t, and it’s simply safer to let them decide for us what the correct url for a given page should be.

Uh huh.

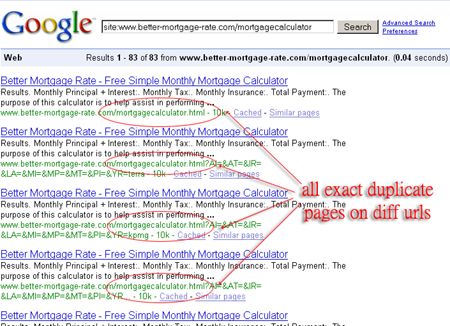

At this point I would like to direct your attention to a blog post I did back in May of this year discussing how Googlebot was creating pages instead of simply indexing them. This is happening because Google is inventing url parameters that shouldn’t be there! They’re still doing it, with the end result being search results that look like this one:

Notice, these are not even dynamic urls to begin with. They are all exact copies of a static url that Google decided to treat as if it were dynamic, and then decided to index as if they were all different pages. At any given time there have been from 80 to 140 of these phantom pages in the index, serving no purpose whatsoever. In fact, I have even seen the fake dynamic versions indexed in place of the genuine page. This is a strong indication that we should in fact not leave it up to Google what parameters mean what. Nuff said on that.
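One way a site can avoid leaving that decision to a crawler is to decide for itself which parameters are real. The following is only a minimal sketch, not anything taken from the Google post: `canonicalizeUrl`, `needsRedirect`, the `allowedParams` list, and the `example.com` addresses are all hypothetical names chosen for illustration. It keeps the query parameters the site recognizes, drops everything else, and reports when the requested url differs from its canonical form.

```javascript
// Hypothetical helper: keep only the query parameters the site actually uses.
// `allowedParams` is an assumption; a purely static page passes an empty list.
function canonicalizeUrl(rawUrl, allowedParams = []) {
  const url = new URL(rawUrl);
  const kept = new URLSearchParams();
  for (const name of allowedParams) {
    // Preserve the first value of each recognized parameter, in a fixed order.
    if (url.searchParams.has(name)) {
      kept.set(name, url.searchParams.get(name));
    }
  }
  // Assigning an empty string removes the query string entirely.
  url.search = kept.toString();
  return url.toString();
}

// True when the requested url is not already in canonical form.
function needsRedirect(rawUrl, allowedParams = []) {
  return canonicalizeUrl(rawUrl, allowedParams) !== new URL(rawUrl).toString();
}

// A static page with invented parameters collapses back to the one real url.
console.log(canonicalizeUrl("http://www.example.com/about.html?foo=1&bar=2"));
// A page that really uses `page` keeps it and drops the unknown `sid`.
console.log(canonicalizeUrl("http://www.example.com/list.html?sid=abc&page=2", ["page"]));
```

A server using something like this could answer any request where `needsRedirect` is true with a 301 to the canonical url, so the phantom variants never have a page of their own to be indexed.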

Additionally, in the Google blog post, the team makes this assertion:

4. If you want to serve a static URL instead of a dynamic URL you should create a static equivalent of your content. – Official Google Webmaster Central Blog

Why on Earth would you make that suggestion, and leave the impression that where the content is pulled from has anything whatsoever to do with how well it will get indexed??? That is the most inane thing I have heard come from someone at Google in a very, very long time. Google (and all of the search engines, as a matter of fact, as well as the visitors) have no idea where the content is pulled from. It makes no difference (as in Zero. Zip. Zilch. Nada. None At All) whether content is pulled from a database or served from a static file. Google can’t tell which is which just by looking at the url. They don’t even bother trying to justify the statement… they just make that claim, knowing full well that people who don’t know any better will take it as gospel. To go and plant the seed in newbie webmasters’ heads that if they are going to be rewriting their urls then they need to be serving content pulled from flat files is beyond pointless… it is actually potentially damaging.

Google makes the claim in the post that Google has “made some progress” in the arena of figuring out how dynamic urls should be treated. The truth of the matter is, webmasters have made much more progress in not having to rely on Google to do that. Most advanced CMSs today have either built-in functionality that lets you correctly rewrite the urls, plugins that accomplish that, or well-written tutorials authored by community members that walk you through the process nicely (and no, you do not need to switch to static pages if you use those rewrites!). Bottom line, my advice is to simply ignore that particular post.
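For anyone who has not seen what such a rewrite amounts to, here is a rough sketch of the idea, assuming a made-up route: a dynamic `/product.php?category=...&id=...` page exposed to visitors as `/product/<category>/<id>`. The function names, the route, and the parameter names are all hypothetical, and a real CMS or web server rewrite module does this through its own configuration rather than through code like this.

```javascript
// Hypothetical route: /product.php?category=<slug>&id=<number>
// is shown to visitors and crawlers as /product/<slug>/<number>.

// Dynamic url -> friendly url (used when the site generates its links).
function toFriendly(dynamicUrl) {
  // The base is only needed so that relative urls can be parsed.
  const url = new URL(dynamicUrl, "http://www.example.com");
  const category = url.searchParams.get("category");
  const id = url.searchParams.get("id");
  if (url.pathname !== "/product.php" || !category || !id) {
    return null; // not a url this rule knows how to rewrite
  }
  return `/product/${encodeURIComponent(category)}/${encodeURIComponent(id)}`;
}

// Friendly url -> dynamic url (used internally when a request arrives).
function toDynamic(friendlyPath) {
  const match = /^\/product\/([^/]+)\/(\d+)\/?$/.exec(friendlyPath);
  if (!match) {
    return null; // leave every other path alone
  }
  const params = new URLSearchParams({
    category: decodeURIComponent(match[1]),
    id: match[2],
  });
  return `/product.php?${params.toString()}`;
}

console.log(toFriendly("/product.php?category=shoes&id=42"));
console.log(toDynamic("/product/shoes/42"));
```

The same dynamic script still produces the page in both directions; only the address that visitors and crawlers see changes, which is why no switch to static files is involved.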

Mike lays the smackdown FTW! Well written, intelligent, and original. Keep up the good stuff man!

My initial impression when reading the Dynamic URLs vs. static URLs post was WTF!

This post is evidence that I was not the only one thinking this.

Great article!

Dude, you can’t talk like that about Google! Google sees everything. You should be afraid,… very afraid.

Oops! Looks like somebody made a faux pas.

I like your post mate. To be honest I’m just confused that Google would choose a series of meaningless characters for the average web user over clear identifiable descriptions on dynamic URLs – blows my mind!

I thought I was seeing things yesterday when I read the story. I agree with Mike in not leaving it up to Googlebot because for years we were told to do the exact opposite.

Mike you are a brave soul….

I am near live on a monster URL re-write project. I explained yesterday to the pointy-hairs and they asked if I could ‘add some gobbledegook parameters back to my nice clean URLs’. LOL.. Maybe Google thinks I should? Thanks Mike for nailing the lunacy of Google’s daft pronouncement.

It’s funny, we had this same issue on our site as well. The problem is that the variations of the URL string get indexed because of link popularity. Or at least it’s that way in our case…

Luckily Yahoo has a dynamic parameters tool where you can suggest to Yahoo what parameters you use for your query string. I would hope that some day the other two SEs get the same or a similar tool.

We found a workaround though that might work for you guys in the meantime… Just hide your variables in a script and put a plain href in the simple HTML that the bots pass through.

    // Navigate from script so the parameterized url never appears in a plain href.
    function navigateToGoodLink1() {
        window.location = "http://www.hostilelink1.com/default.aspx?isc=gdppg101&ci=1234";
        return 0;
    }

Good Link

JJ, actually, that’s what URL rewriting solves… it turns parameterized urls into friendly ones. The post that the Google Webmaster Team made told people that they were better off not rewriting their urls.

Contradictory statements are a specialty of G. I am not sure, but I think their main intention in doing this is to confuse people about their algo and strategies.

Also, negative things carry more weight than positive things do.

-DS

Hey guys, just wanted to point something out as to Google’s philosophy on “easy to read” URLs. First of all, when was the last time you actually typed in a URL past the .com or .net? Most often nowadays people get to deep URLs through either a) bookmarks, b) links from other pages, or most commonly c) Google.

I’m sure you’ve read the articles about people who just type “hotmail.com” into Google instead of the address bar. For those users it allows them to type sloppily and still get where they want to go, and the same is true for many web pages. It’s easier to remember the two or three words you used to initially search for a page than its full URL. So what I’m getting at here is that Google benefits from every website having impossible-to-remember URLs. It means that more people will use Google as their first point of contact for any browsing session, which means more ad revenue for the big guy.

Just something to think about.