On Friday I wrote a piece on how it looked like Google was testing AJAX results in the main serps. Some discussion followed as to whether, if this change were to become a widespread permanent one, this would affect Firefox plugins that existed (definitely some existing ones would stop working), break some of the rank checking tools out there (they would have to be re-written I’m sure), and even some people asking if it would thwart serps scrapers from using serps for auto page generation (not for long, no).

While those things would definitely be affected in at least the short term, there is a much greater impact from Google switching to AJAX. All of the issues mentioned involve a very small subset of the webmastering community. What actually breaks if Google makes this switchover, and is in fact broken during any testing they are doing, is much more widespread. Every single analytics package that currently exists, at least as far as being able to track what keywords were searched on to find your site in Google, would no longer function correctly.

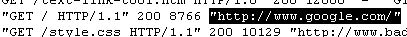

The reason this breaks is core to the way all of the browsers handle referrer strings, which is how the browser tells the web server how it got to your page in those cases where it does send that information. Sending the referrer string is optional, and can even be turned off, although by default it is on in all of the major browsers that exist today. Analytics programs, whether they are log based (programs that go through the server logs reading the referrer strings) or Javascript based (such as Clicky, the built-in tracking in MyBlogLog, and Google Analytics), use that referrer string to determine what someone actually searched on before reaching your site, assuming that they found your site via a search engine. For Google, they analyze the querystring portion of the referring url, and look for the “q={keyword}” parameter. So for instance, if you were to find the following url in your logs:

![Google search for [troll defense]](/images/google-search-troll-defense.png)

then you would know that someone had gone to Google, searched on [troll defense], and then clicked on the link to your site. If you were running some form of analytics program, that program would then register and track that search for you, so you knew what was and what was not sending you traffic from Google. If you run Google Analytics, you can even hack it to get the full referring url and get even more information from the referral.
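
To make that concrete, here is a minimal sketch of the kind of lookup an analytics program performs on a referring url. The function name `keywordFromReferrer` and the sample url are my own illustrations, not code from any particular package, and a real package would handle many more search engines and edge cases.

```javascript
// Sketch only: pull the searched phrase out of a Google referring url.
// keywordFromReferrer is a hypothetical helper, not part of any real package.
function keywordFromReferrer(referrer) {
  let url;
  try {
    url = new URL(referrer);
  } catch (e) {
    return null; // empty or malformed referrer
  }
  // Loose check that the referring host is a Google domain.
  if (!/(^|\.)google\./.test(url.hostname)) {
    return null;
  }
  // searchParams decodes "+" and %xx escapes; null when there is no q parameter.
  return url.searchParams.get("q");
}

// Assumed example of a classic Google results url.
console.log(keywordFromReferrer("http://www.google.com/search?q=troll+defense")); // troll defense
```

Anything that is not a parsable Google url, or that has no `q` parameter, comes back as `null`, which is the case that matters for the rest of this post.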

However, if Google switches over to AJAX none of this will be possible. Unfortunately this isn’t even something that could be accomplished with a simple recoding of the analytics packages either… it would require completely rewriting the browsers themselves in order to track referrals from the new Google AJAX searches. The new AJAX url, as I mentioned in the earlier post, is driven by parameters that come after the hash mark (the number or pound sign in the url). Browsers do not include that data in the referrer string, and it is never sent to the server. Therefore, all referrals from a Google AJAX driven search currently make it look as if you are getting traffic from Google’s homepage itself. Now, while this kind of information showing up in your tracking programs might be quite a boost to the ego if you don’t know any better, and will work wonders for picking up women in bars (“guess who links to me from their homepage, baby!”), for actual keyword tracking it is of course utterly useless.
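
As a rough illustration of why the keyword goes missing, the sketch below models what the browser does before it reports a referrer: it drops the hash mark and everything after it. The helper `referrerSentFor` is hypothetical, and the AJAX url is only an assumed shape based on the description above.

```javascript
// Sketch only: model the referrer a browser reports for a given page url.
// referrerSentFor is a hypothetical helper; browsers do this internally.
function referrerSentFor(pageUrl) {
  const url = new URL(pageUrl);
  url.hash = ""; // the fragment (hash mark and after) is never sent
  return url.toString();
}

// Assumed shape of an AJAX results url, with the query after the hash mark.
const ajaxSearch = "http://www.google.com/#q=bad+neighborhood+vandemar";
const reported = referrerSentFor(ajaxSearch);

console.log(reported); // http://www.google.com/
console.log(new URL(reported).searchParams.get("q")); // null
```

With the query moved behind the hash mark, what is left looks exactly like a visit from the Google homepage.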

On Friday I set up a couple of tests to demonstrate this, so I could show the results in the server logs, MyBlogLog tracking, and even in Google Analytics itself. I found a phrase that I was ranking for, that I knew that no one was searching on, [bad neighborhood vandemar], and sent myself some referrals. In every case the method I checked showed Google.com itself as the referring site. You can see this in the server web traffic log:

in MyBlogLog stats reporting:

and even in Google Analytics:

I don’t know if Google has considered these ramifications in making this switch over, or if this testing is something that they are actually interested in pursuing. I don’t know why they would be testing a change of this magnitude in the first place, though, unless there was a good chance that they would eventually go live with it.

Good analysis!

Currently, it’s anybody’s guess whether Google actually *wants* to downgrade referrer data and devalue it for stats analysis purposes – it would make eminent sense, however, in that it helps them regain greater control over their data, makes life more difficult for SEOs, etc. etc. As behooves a major data mining outfit…

(Obviously, it will also push SERP scraping to the next level by way of the SEO community’s working around this issue, but that’s another can of worms in its own right.)

Michael,

Will it affect analytics programs that use javascript code on each page rather than server logs?

From what you’re saying, it sounds like more of a log file problem (which I understand many analytics programs use).

This is perfectly in line with what I would expect, although I haven’t thought it through very far yet. Why should Google want anything other than Google.com in your traffic logs? Google.com gets credit for the traffic, and leaks nothing else. Perfect.

I completely expect more of this from Google over time. If UI advances enable a perfect excuse to deny referrer data, then that’s kismet for the big G. They get to claim it’s progressive and poo-poo any claims that they are taking steps to preserve their power over webmasters.

Coming soon – want to see referrers? Sign up for Google Analytics, and maybe someday pay a fee. Why not? I have yet to see Google acknowledge it gets any value at all from smart webmasters. On the contrary, the dumber the better for Google.

What the heck is that avatar you assigned? It’s enough to prevent commenting, for sure.

A change like this, especially if there is no work around, should certainly be done with plenty of warning as web analytics vendors would be screwed & all their customers as well. This is a big deal.

Jill, as I explained in the post, and showed graphics for, it definitely affects Javascript based tracking as well.

Great post Michael. This would be completely devastating to not just webmasters but marketing people and everyone who relies on referring phrase analysis. Not knowing how your traffic came to you does 3 things:

1) Reduces your knowledge about your audience

2) Reduces your knowledge about how your site performs

3) Reduces your ability to perform any fundamental SEO related tasks.

I can only imagine that this was some sort of test.

If Google Analytics became the only way of getting referrer strings, that becomes an abuse of a near-monopoly. The European Union will come to our rescue. In about 3-5 years’ time. Let’s hope it doesn’t come to that.

This is huge for other analysis software.

Will Google tweak its very own Analytics script to be the only script to show detailed Google referrals?

Increasing Market Share baby!

Of course, what’s not being analyzed here (no criticism intended: being beyond the scope of this post, I didn’t really expect it anyway) is the fact that Ajax will allow Google to track just about every mouse movement and navigational action by every user hitting their pages – this is breathtakingly invaluable data: for Google the data mining behemoth to laser target their advertising, but equally so for the intelligence and law enforcement agencies (think: tracking user behavior to IPs, geo location etc.!) – this is a very very sweet fruit, hanging very very low for them, so why should they want to resist that temptation? Unless someone/something actually forces them to abandon it…

@fantomaster – actually, they can get that data now by using AJAX if they wanted, without actually changing how the serps work. I’m not sure how much extra data they would get by tracking something other than actual clicks though.

@Jonathan Beeston – just to clarify, this only affects traffic coming from Google itself. How Google delivers its serps has no effect on how tracking software works with other search engines.

True, they don’t need to truncate their referrers for that. Well, if you do it the way ClickTale does, it’s a LOAD of behavioral data you can get from that – clicks, true: but what kind, when, where, frequency. And tie this in with geo location, IP, browser history etc. and what you have is as good and granular an individual profile as you can wish for at the current state of technology. Quite scary, really.

If Google Analytics does implement some way of picking up the search term from an AJAX-based search, surely this method would have to be open to any analytics package? As the data must be sent to the site visited from the SERPs to be picked up by the individual site in any way at all.

That’s not actually true. They already track clicks and the destinations of them on their end. They could easily use that tracking data in Google Analytics if the destination site is utilizing it, and track everything behind the scenes if they wanted to. Nothing would have to get sent to the browser. It would almost be like tracking clicks inside your own site.

I think Jill’s right. Javascript tracking can easily get around this. You show a Google Analytics example, but that’s just how GA must be set up. I use Yahoo Analytics and I am fairly sure I can see stuff after the # and what’s more, we can set up rules and reports based around these parameters. I don’t need the referral string, because the data comes into the landing page URL, which is great for me, because there are fewer false positives.

I also agree that Google can already track mouse movements if they want… and I don’t think they even need Ajax do they? Crazyegg uses javascript to track mouse movement… snippet of code into any page and bingo… everyone’s mouse movement is tracked, heat maps and everything.

Dixon (Receptional)

Dixon, unless you are talking about specific tracking urls that you set up for PPC tracking, the only url that contains the query that was searched on is the referring url from the search engine.

Aaaah. I see your point. Google could choose to add parameters that would allow us to track, but probably wouldn’t by default – not least because some websites would break if they did.

I just hope they don’t push this on their production systems. As you’ve said it’s still in the testing phase and is not yet in their mainstream. But if they do, it would seem to me as a violation of their “Don’t be evil” principle if they don’t give a transition period for most tools to migrate to what they want to achieve.

Also, I am for this if they could prove it beneficial to the whole internet and not just to perpetuate their own products and services and become a monopoly again.

@Ismael – This is on production servers. I’m not hitting a test server… I am hitting a live server that they are doing testing on. It’s just not on all of the servers, nor do we know if it ever will be. They perform tests on live servers all of the time. They have to. With a platform like theirs, the only meaningful tests would be on a sampling of live traffic.

Aha, I was seeing hundreds of these empty google referers today and wondered what was going on.

In the past a google referer with nothing after it was either referer_spam of some sort (which is what I thought this was) or else it was the referer I got when someone clicked the “I’m Feeling Lucky” button.

Google can do whatever they want but this effectively severs a vital part of the relationship between what they do and what the rest of us do.

The only upside I see to this is that the Ajax-powered search seems faster than the non-Ajax version.

Whoa. Back the click tracking bus up a bit. What did Google recently release? Their very own browser. Now they are messing around with the SERPs so that browsers can’t tell where clicks came from. Want to bet that 1 browser CAN tell? I bet they have something in THEIR browser that reads something other than referrer to tell where exactly the traffic came from. Google may be many things, but stupid is not one of them. If Ajax breaks analytics, then it is bad for them too. So before they introduce something that would damage themselves, you can bet they already have the fix in for their products.

@Don – the version of Chrome that I tested, which is 0.2.149.27 and based on AppleWebKit/525.13, does not in fact pass the fragment.

If someone with a newer version of Chrome could test and let us know for sure, that would be great. To do this, pick a query that you know one of your sites ranks for (a site where you have access to the traffic logs), manually construct the search url with a fragment on the end, and click on your site when it comes up in the results. Then download the logs, open them in a text editor (I use NoteTab Light), and look for your click. When I looked there was definitely no fragment, using the following query:

http://www.google.com/search?q=endless+poetry#lookforthisfragment

and I got this in my logs:

xxx.xxx.xxx.xxx - - [03/Feb/2009:16:36:34 -0600] "GET / HTTP/1.1" 200 6925 "http://www.google.com/search?q=endless+poetry" "Mozilla/5.0 (Windows; U; Windows NT 5.0; en-US) AppleWebKit/525.13 (KHTML, like Gecko) Chrome/0.2.149.27 Safari/525.13"

So, as you can see, no hash mark bit in there, so nothing for Analytics to grab.
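
For anyone who would rather not eyeball the raw logs, here is a small sketch that pulls the referrer out of a line like the one above and checks it for a query and a fragment. It assumes the log is in the usual Apache combined format with plain ASCII quotes and hyphens; `parseLogLine` is just an illustrative name.

```javascript
// Sketch only: parse one line of an Apache "combined" format access log.
// Assumes plain ASCII quotes and hyphens; parseLogLine is a hypothetical helper.
const LOG_LINE =
  /^(\S+) \S+ \S+ \[([^\]]+)\] "([^"]*)" (\d{3}) (\S+) "([^"]*)" "([^"]*)"$/;

function parseLogLine(line) {
  const m = LOG_LINE.exec(line.trim());
  if (!m) {
    return null; // not in the expected format
  }
  return {
    host: m[1],
    time: m[2],
    request: m[3],
    status: Number(m[4]),
    bytes: m[5],
    referrer: m[6],
    userAgent: m[7],
  };
}

const line =
  'xxx.xxx.xxx.xxx - - [03/Feb/2009:16:36:34 -0600] "GET / HTTP/1.1" 200 6925 ' +
  '"http://www.google.com/search?q=endless+poetry" ' +
  '"Mozilla/5.0 (Windows; U; Windows NT 5.0; en-US) AppleWebKit/525.13 ' +
  '(KHTML, like Gecko) Chrome/0.2.149.27 Safari/525.13"';

const entry = parseLogLine(line);
const ref = new URL(entry.referrer);
console.log(ref.searchParams.get("q")); // endless poetry
console.log(ref.hash === ""); // true, the fragment never reached the server
```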

If you haven’t seen the statement on Search Engine Land, here’s what it said:

“We’re continually testing new interfaces and features to enhance the user experience. We are currently experimenting with a javascript enhanced result page because we believe that it may ultimately provide a faster experience for our users. At this time only a small percentage of users will see this experiment. It is not our intention to disrupt referrer tracking, and we are continuing to iterate on this project. For more information on the experiments that we run on Google search, please see: http://googleblog.blogspot.com/2006/04/this-is-test-this-is-only-test.html.”

@Matt – Thanks for chiming in. I did see that quote earlier on SEL, thank you. As I mentioned in closing, obviously only Google knows what their plans are for this feature. You guys do in fact sometimes go live with things that you tested though, so this is definitely worth keeping an eye on, wouldn’t you agree? Especially if it winds up being a change that affects things so that only Google Analytics can track keywords used in Google referrers.

I know though, often times you guys do test things that never happen, like the plethora of test icons that never went live:

http://www.techcrunch.com/2009/01/09/google-gets-a-new-favicon-again-its-uh-colorful/

@Matt many of us do not read Search Engine Land since it doesn’t represent us well, so thanks for chiming in here (where the issue was raised a few days before SEL republished it).

I totally agree with Google the editorial adds significant value to the reader, hence my hopes that you’ll continue the hard work of participating in the web and not just post to the best-funded aggregator.

“parameters that come after the hash mark (the number or pound sign in the url). Browsers do not include that data in the referrer string, and it is never sent to the server.”

Um, my Sitemeter logs show me, under visitor details, when someone completes a Survey Gizmo form. The end url is something like seoroi.com/contact#sgwrap . So couldn’t log powered analytics get this data? Maybe I’m missing something, since I can’t code like you?..

Gab, that’s your landing page, not the referring page. Unless you are showing only the very tail end of the url? Can you copy and paste an example?

Well, I don’t think this is ever gonna happen. All weblog parsers will be affected. I say all because Urchin is one of them. So I don’t think Google will ever switch to a search that will break their own software. And it’s not a thing that they can fix from Urchin. As you’ve underlined, the browsers are the problem, which Google has no control over.

Bottom line is this: don’t worry, it will NEVER happen.

“it would require completely rewriting the browsers themselves in order to track referrals from the new Google AJAX searches”

Does Google’s Chrome browser handle this differently? Is Chrome already capable of tracking referrals from the Google Ajax search?

Well, even if Google Chrome knew to send the whole string with the hash mark, most web servers would probably ignore it, because they would interpret it as an anchor. I have tested this programmatically, by sending a request containing the hash mark, and the server totally ignored what was after the hash mark.

Doesn’t Google Analytics use JS as opposed to server data though ..?

Yeah, it does, but so do the other analytics. The thing is that using JavaScript limits the accuracy of the web parser analytics, including Urchin, which is a Google brand now. So switching to a hash mark separated query would be like kicking themselves in the behind. Google Analytics is fine for small websites, and for people who don’t do very detailed analysis of the incoming traffic. You can’t track direct downloads, which is very important for someone who develops products. So the big business comes from Urchin, on the analytics side of Google. Since this hash mark issue is not an analytics software related problem, they cannot fix it just in Urchin. There are also a number of other things that GA cannot do, such as spider/crawler tracking, which is also important, or bandwidth usage.

Actually, if you read my post I explained… it has nothing to do with whether the analytics program uses Javascript or is server log based. Both are affected equally, as it is an issue with how the browser passes the referrer string.
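
A rough sketch of why the Javascript side fares no better: a tracking script on the landing page reads the referrer from `document.referrer`, which the browser fills in without the part after the hash mark. The `collectReferral` function and the stand-in `doc` objects are hypothetical, there so the example can run outside a browser.

```javascript
// Sketch only: what a Javascript tracker on the landing page has to work with.
// `doc` stands in for the browser's `document`; collectReferral is hypothetical.
function collectReferral(doc) {
  const referrer = doc.referrer || "";
  let keyword = null;
  try {
    keyword = new URL(referrer).searchParams.get("q");
  } catch (e) {
    // No referrer at all (direct visit, or the browser withheld it).
  }
  return { referrer: referrer, keyword: keyword };
}

// Classic results page: the query string survives in the referrer.
console.log(collectReferral({ referrer: "http://www.google.com/search?q=endless+poetry" }));

// AJAX results page: the part after the hash mark was dropped by the browser
// before the script ever ran, so there is no keyword left to find.
console.log(collectReferral({ referrer: "http://www.google.com/" }));
```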

Michael, that was not what I meant. Google can do something like send another header containing the actual referrer URL with query and all, and then using the Urchin analytics js code to retrieve that header value from the HTTP request. In that case the js problem would be fixed. Something like: if the request contains the “GoogleReferrerURL” header, then it comes from Google and this is the actual referrer. Also, I think they can fix this problem using cookies. My guess is these are not the only ways to trick the webserver but not the javascript. But as I said, they have nothing to gain from that. Only a lot to lose.

Hey Michael.

Not sure if your comment (#43) was for me or Christi (#42).

I’ve been reading several posts on this issue, which is not hard considering the coverage it’s getting. But that is my point exactly – if the issue is browser-orientated, is Chrome (Google’s own browser) capable of handling it..?

Cause if Chrome can handle it, and one is using Google’s ga.js, then there won’t be a break, will there ..?

I am well aware of Chrome’s very minimal market share, but that’s not my point. I’m just looking for a conspiracy theory, and to know if Google is setting up webmasters to use gAnalytics exclusively, and to push/promote/force Chrome as a recommended browser. It might sound absurd, but so did 2 kids in a garage setting up a “Do No Evil” Empire that we’re all now so reliant on.

Cristi, that’s not at all how it works. Google has absolutely no control over the headers that the browser sends when it visits a website. Yes, they could track it by cookies, but they don’t need to, since they already have that information. They could just cross-reference what they collected on the search end, including the click through to the website… but again, that leaves us with Google Analytics then being the only stats package that can track the data.

The version of Chrome that I tested did not pass the information after the hash mark. None of the major browsers did. There is only one I saw so far that does pass it, and that is the iPhone.

Michael, you can use javascript to proxy all anchor hrefs through a javascript function. When you compose that request you can add any header you want to it. See for yourself http://www.devx.com/DevX/Tip/17500

After that, all that’s left to do is interpret that header from the script installed on your site.

To complete my last post… To proxy the a href through a method you can do it like this:

    // Route every link on the page through proxyFunction.
    var listOfAs = document.getElementsByTagName("a");
    for (var i = 0; i < listOfAs.length; i++) {
        // "this" is the clicked link; returning false cancels the normal navigation.
        listOfAs[i].onclick = function () {
            proxyFunction(this.href);
            return false;
        };
    }

And that is it. Do whatever you need in proxyFunction

Christi, the link you posted is for retrieving XML documents for use in AJAX, and has nothing whatsoever to do with navigating from a page on one site to another. You are also confusing what is sent to the server with what a client side script is able to interpret. No offense, but you are mixing technologies and concepts, and getting them wrong in doing so.

I don’t really want to get into a long detailed technical discussion in the comments here about how Google could fix this issue if they decided to go live with these changes. Please feel free to post a full solution if you think you have one elsewhere though, and then link to that from here if you like.